Bibliometrics in the press, or the representation of indicators in the Italian news

The use of bibliometric indicators such as the h-index or the journal impact factor is not limited to academia: from time to time, they make it into the news as well. Our author studied Italian news articles and found that these indicators appear in quite different contexts and serve a variety of functions.

In spring 2020, Italy, like other countries in Europe and around the world, was in the midst of the first wave of the Covid-19 pandemic. Lockdown measures were in place across the entire country in an attempt to limit the spread of the virus. In these hard times, the Italian public was exposed to hitherto specialist notions of epidemiology such as exponential growth, the basic reproduction number, respiratory droplets and so on. Experts from medicine and other scientific fields had rapidly acquired a new centrality in the media and in government. A scientific-technical committee was established to advise the government, while medical doctors and scientists were routinely interviewed in newspapers and invited onto talk shows.

In this context, on May 2 the newspaper Il Tempo published an article bearing the title: “The poorest experts in the world: Burioni, Pregliasco and Brusaferro”.[1] In this article, the scientific reliability of several experts who sat on the scientific-technical committee or appeared in the media was gauged using the most (in)famous bibliometric indicator, the h-index. Experts were ranked, and licenses of expertise were granted or revoked, based on their h-index scores. The journalist explained:

The frankly crude use of the h-index in the article attracted much criticism from the Italian scientific community (see here and here, sources in Italian). For my part, I was deeply struck by how a specialized notion from bibliometrics had percolated into the generalist press and was being mobilized in public debates on scientific authority and the trustworthiness of experts. I was aware that bibliometricians and STS scholars were increasingly revealing the performative nature of bibliometric indicators: far from being neutral measures, these statistical constructs shape the behavior of scientists and deeply intrude into the epistemic structure of the sciences themselves. However, this kind of research had so far focused mainly on intra-scientific contexts and practices, paying little attention to extra-scientific arenas. Yet the article mentioned above seemed to me a clear example of how academic and scientific actors are not alone in generating the social representation of bibliometric indicators: a complete description of how their meaning is negotiated should encompass further actors, such as journalists, and further arenas, such as the press.

I then started a research project to investigate the representations and uses of bibliometrics in the press, with the aim of extending previous classifications of the relevant actors. I decided to focus on the Italian press for two reasons. First, Italy’s research evaluation system is heavily based on bibliometric indicators. This system was introduced in 2010 as part of a sweeping reform of the country’s university management, which was strongly contested by the Italian academic community. Second, Italy lacks a strong indigenous community of bibliometrics experts, so no community could claim epistemic control over the public discourse on bibliometrics in the country. These factors made newspapers a key arena for discussing bibliometrics and bibliometric indicators and, hence, an ideal vantage point from which to observe the collective construction of their social representation.

Using the online archives of four major Italian newspapers, I retrieved a corpus of 583 articles, published between 1990 and 2020, that mentioned the Journal Impact Factor, the h-index, or other bibliometrics-related terms. In this blog post I cannot go into the details of this very rich material.[2] I will try, however, to highlight what I deem to be the three most interesting findings and suggest some ideas for further research.

Indicators in the press between meritocracy, science news, and rankings

The first result is that the Impact Factor (IF, in the following) first appeared in the Italian press in news about scandals in competitions for university chairs. In the early 1990s, it became common practice to sum the IFs of the journals in which a scientist had published, obtaining an IF-based metric for individual researchers. Candidates rejected in competitions thus had at their disposal a new, easily interpretable metric with which to compare their scientific performance with that of the winners and, on that basis, claim justice. In this sense, the IF began its career in the Italian press as a “justice device” to promote meritocracy in academic recruitment. The following quote is representative of the general tone of the news denouncing scandals:

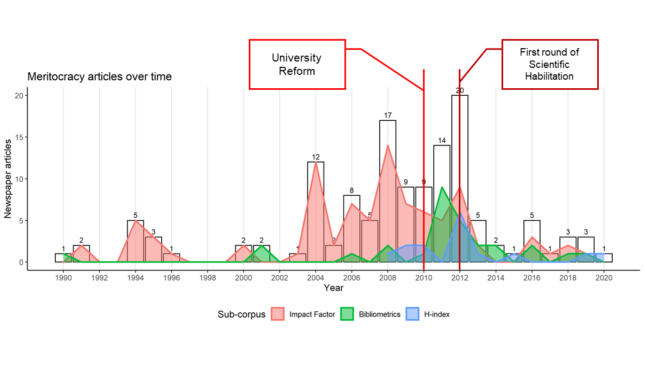

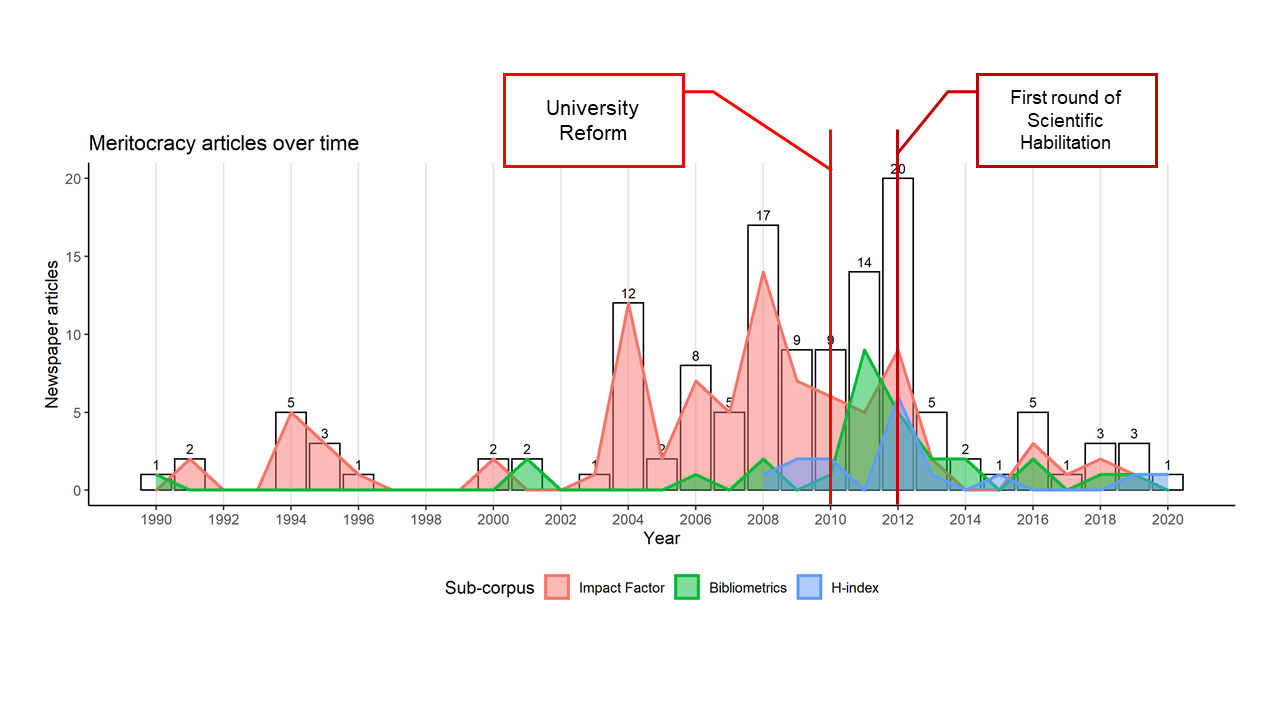

The rhetorical use of indicators as objective measures that can fix the perceived endemic clientelism of Italian academia grows over the years and reaches its highest intensity in the years before the implementation of the 2010 university reform (Figure 1).

This shows that indicators were integrated into a meritocracy-centered narrative frame long before they were officially enrolled in the Italian research evaluation system.
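The summed-IF metric used by candidates in the early 1990s is simple arithmetic: a researcher's score is the sum of the Impact Factors of the journals in which each of their papers appeared. The sketch below illustrates only that arithmetic; the journal names and IF values are hypothetical and invented for the example, not data from the study.

```python
# Hypothetical journal Impact Factors, invented purely for illustration.
JOURNAL_IF = {
    "Journal A": 4.2,
    "Journal B": 1.5,
    "Journal C": 0.8,
}


def summed_if(publications: list[str], journal_if: dict[str, float]) -> float:
    """Sum the IF of the publishing journal over all papers (one entry per paper)."""
    return sum(journal_if[journal] for journal in publications)


# A rejected candidate compares their summed IF with the winner's.
candidate = ["Journal A", "Journal B", "Journal B"]
winner = ["Journal C", "Journal C"]
print(round(summed_if(candidate, JOURNAL_IF), 2))  # 7.2
print(round(summed_if(winner, JOURNAL_IF), 2))  # 1.6
```

Because every term is a journal-level value, the score reflects where a researcher published rather than how often their individual papers were cited.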

The second finding, which is particularly relevant for understanding how the press contributes to the social construction of scientific facts, is that the Impact Factor is frequently used by journalists as a “quality seal” for science news. The IF is presented as a guarantee of the scientific reliability of the publication venue and, hence, of the credibility or relevance of the science news reported:

Note that no article in which the IF plays this role mentions the limitations of the indicator, which is therefore presented as a completely “transparent” quality seal, easily interpretable and uncontested.

Interestingly, the IF can also be mobilized to deconstruct the validity of research in the news. For instance, the reliability of a study allegedly showing the efficacy of homeopathy is contested on the basis of the IF of the publishing journal, which is described as “very low” compared to that of serious scientific journals such as Nature.

The third interesting result concerns the role of amateur bibliometrics in the press, that is, bibliometrics produced by nonprofessional bibliometricians. The h-index arrived in the Italian press in 2008, just three years after its creation by Jorge Hirsch. The “vector” of the indicator was a ranking of Italian scientists known as “Top Italian Scientists” (TIS), published online by the association Virtual Italian Academy. In 2010 and 2011, most of the articles that mentioned the indicator were in fact about the TIS. The TIS offered journalists a ranking of individual scientists that nicely complemented the university rankings that started popping up in the press in the same years. However, it was the result of a private initiative without institutional support. Again, about one in three news articles about the TIS ranking lacks any definition of the indicator, and fewer than half report its limitations.

These three findings show that bibliometric indicators in the press occur in different contexts, play a wide range of functions, and are integrated into different narrative frames. They appear in debates on academic recruitment, but also in the communication of scientific discoveries to the public. They can be used to claim justice, but also to satisfy the hunger for rankings and for measuring “excellence”.

Next steps

The next natural step in the investigation of bibliometrics in the press is to understand how the social meaning of indicators is constructed in the press of other countries and in other media or types of press. It has, for instance, been suggested to me that Dutch journalists represent the IF differently from their Italian colleagues, using it as a “shorthand” for any bibliometric statistic. In this research, I analyzed the generalist press, but there is also a specialist press, such as Times Higher Education, and there are specialist blogs, such as Leiden Madtrics and ROARS (Return on Academic Research), in which the representation of indicators may follow different logics. Bibliometrics in the press still has a lot to tell us.

Photo credits header image: Ludovica Dri
